11 Random Forests

This page is a work in progress and minor changes will be made over time.

Random forests are a composite (or ensemble) algorithm built by fitting many simpler component models, decision trees, and then averaging their predictions. Owing to their built-in variable importance measures, random forests are commonly used in high-dimensional settings when the number of variables far exceeds the number of observations (rows). High-dimensional datasets are common in survival analysis, especially when considering omics, genetic, and financial data. It is therefore no surprise that random survival forests remain a popular and well-performing model in the survival setting, both for predictive modeling and pattern recognition.

11.1 Random Forests for Regression

Training of decision trees can include a large number of hyper-parameters and different training steps including ‘growing’ and subsequently ‘pruning’. However, when utilized in random forests, many of these parameters and steps can be safely ignored, hence this section only focuses on the components that primarily influence the resulting random forest. This section will start by discussing decision trees and will then introduce the bagging algorithm used to create random forests.

11.1.1 Decision Trees

Decision trees are a (relatively) simple machine learning model that are comparatively easy to implement in software and are highly interpretable. The decision tree algorithm takes an input, a dataset, selects a variable that is used to partition the data according to some splitting rule into distinct non-overlapping datasets or nodes or leaves, and repeats this step for the resulting partitions, or branches, until some criterion has been reached. The final nodes are referred to as terminal nodes.

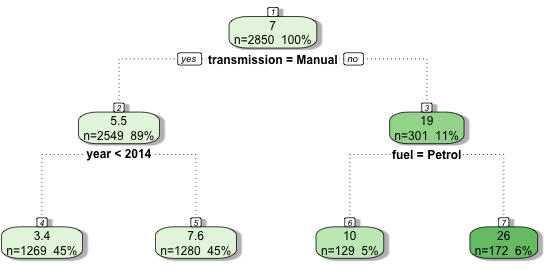

By example, (Figure 11.1) demonstrates a decision tree predicting the price that a car sells for in India (price in thousands of dollars). The dataset includes as variables the registration year, kilometers driven, fuel type (petrol or automatic), seller type (individual or dealer), transmission type (manual or automatic), and number of owners. The decision tree was trained with a maximum depth of 2 (the number of rows excluding the top), and it can be seen that with this restriction only the transmission type, registration year, and fuel type were selected variables. During training, the algorithm identified that the first optimal variable to split the data was transmission type, partitioning the data into manual and automatic cars. Manual cars are further subset by registration year whereas automatic cars are split by fuel type. It can also be seen how the average sale price (top value in each leaf) diverges between leaves as the tree splits – the average sale prices in the final leaves are the terminal node predictions.

The graphic highlights several core features of decision trees:

- They can model non-linear and interaction effects: The hierarchical structure allows for complex interactions between variables with some variables being used to separate all observations (transmission) and others only applied to subsets (year and fuel);

- They are highly interpretable: it is easy to visualise the tree and see how predictions are made;

- They perform variable selection: not all variables were used to train the model.

To understand how random forests work, it is worth looking a bit more into the most important components of decision trees: splitting rules, stopping rules, and terminal node predictions.

Splitting and Stopping Rules

Precisely how the data partitions/splits are derived and which variables are utilised is determined by the splitting rule. The goal in each partition is to find two resulting leaves/nodes that have the greatest difference between them and thus the maximal homogeneity within each leaf, hence with each split, the data in each node should become increasingly similar. The splitting rule provides a way to measure the homogeneity within the resulting nodes. In regression, the most common splitting rule is to select a variable and cut-off (a threshold on the variable at which to separate observations) that minimises the mean squared error in the two potential resulting leaves.

For all decision tree and random forest algorithms going forward, let \(L \subseteq \{1,\ldots,n\}\) denote the set of observation indices in a leaf, with mean outcome \(\bar{y}_L = \frac{1}{|L|}\sum_{i \in L} y_i\).

Let \(j = 1,\ldots,p\) index the features and let \(c_j\) be a possible cutoff value for feature \(j\). Define

\[ \begin{split} L^l(j,c_j) := \{i : x_{i;j} < c_j\} \\ L^r(j,c_j) := \{i : x_{i;j} \geq c_j\} \end{split} \]

as the two leaves resulting from partitioning feature \(j\) at cutoff \(c_j\). To simplify notation, let \(L^l, L^r\) denote \(L^l(j,c_j)\) and \(L^r(j,c_j)\) respectively. A split is then determined by the arguments \((j^*, c^*_{j^*})\) minimizing the residual sum of squares across both leaves (James et al. 2013),

\[ (j^*, c_{j^*}^*) = \mathop{\mathrm{arg\,min}}_{j, c_j} \sum_{i \in L^l} (y_i - \bar{y}_{L^l})^2 + \sum_{i \in L^r} (y_i - \bar{y}_{L^r})^2. \tag{11.1}\]

This method is repeated from the first leaf to the last such that observations are included in a given leaf \(L\) if they satisfy all conditions from all previous branches (splits); features may be considered multiple times in the growing process allowing complex interaction effects to be captured.

Leaves are repeatedly split until a stopping rule has been triggered – a criterion that tells the algorithm to stop partitioning data. The stopping rule is usually a condition on the number of observations in each leaf such that leaves will continue to be split until some minimum number of observations has been reached in a leaf. Other conditions may be on the depth of the tree (as in Figure 11.1 which is restricted to a maximum depth of 2), which corresponds to the number of levels of splitting. Stopping rules are often used together, for example by setting a maximum tree depth and determining a minimum number of observations per leaf. Deciding the number of minimum observations and/or the maximum depth can be performed with automated hyper-parameter optimisation.

Terminal Node Predictions

The final major component of decision trees are terminal node predictions. As the name suggests, this is the part of the algorithm that determines how to actually make a prediction for a new observation. A prediction is made by ‘dropping’ the new data ‘down’ the tree according to the optimal splits that were found during training. The resulting prediction is then a simple baseline statistic computed from the training data that fell into the corresponding node. In regression, this is commonly the sample mean of the training outcome data.

Returning to Figure 11.1, say a new data point is {transmission = Manual, fuel = Diesel, year = 2015}, then in the first split the left branch is taken as ‘transmission = Manual’, in the second split the right branch is taken as ‘year’ \(= 2015 \geq 2014\), hence the new data point lands in the second terminal leaf and is predicted to sell for $7,600. The ‘fuel’ variable is ignored as it is only considered for automatic vehicles. As the final predictions are simple statistics based on training data, all potential predictions can be saved in the original trained model and no complex computations are required in the prediction algorithm.

11.1.2 Random Forests

Decision trees often overfit the training data, hence they have high variance, perform poorly on new data, and are not robust to even small changes in the original training data. Moreover, important variables can end up being ignored as only subsets of dominant variables are selected for splitting.

To counter these problems, random forests are designed to improve prediction accuracy and decrease variance. Random forests utilise bootstrap aggregation, or bagging (Breiman 1996), to aggregate many decision trees. Bagging is a relatively simple algorithm, as follows:

- For \(b = 1,\ldots,B\):

- \(D_b \gets \text{ Randomly sample with replacement } \mathcal{D}_{train}\)

- \(\hat{g}_b \gets \text{ Train a decision tree on } D_b\)

- end For

- return \(\{\hat{g}_b\}^B_{b=1}\)

Step 2 is known as bootstrapping, which is the process of sampling a dataset with replacement; in contrast to more standard subsampling where data is sampled without replacement. Commonly, the bootstrapped sample size is the same as the original. However, as the same value may be sampled multiple times, on average the resulting data only contains around 63.2% unique observations (Efron and Tibshirani 1997). Randomness is further injected to decorrelate the trees by randomly subsetting the candidates of features to consider at each split of a tree. Therefore, every split of every tree may consider a different subset of variables. This process is repeated for \(B\) trees, with the final output being a collection of trained decision trees.

Prediction from a random forest for new data \(\mathbf{x}^*\) follows by making predictions from the individual trees and aggregating the results by some function \(\sigma\), which is usually the sample mean for regression:

\[ \hat{g}(\mathbf{x}^*) = \sigma(\hat{g}_1(\mathbf{x}^*),...,\hat{g}_B(\mathbf{x}^*)) = \frac{1}{B} \sum^B_{b=1} \hat{g}_b(\mathbf{x}^*) \]

where \(\hat{g}_b(\mathbf{x}^*)\) is the prediction from the \(b\)th tree for some new data \(\mathbf{x}^*\) and \(B\) are the total number of grown trees.

As discussed above, individual decision trees result in predictions with high variance that are not robust to small changes in the underlying data. Random forests decrease this variance by aggregating predictions over a large sample of decorrelated trees, where a high degree of difference between trees is promoted through the use of bootstrapped samples and random candidate feature selection at each split.

Usually many (hundreds or thousands) trees are grown, which makes random forests robust to changes in data and ‘confident’ about individual predictions. Other advantages include having tunable and meaningful hyper-parameters, including: the number of variables to consider for a single tree, the splitting rule, and the stopping rule. Random forests treat trees as weak learners, which are not intended to be individually optimized. Instead, each tree captures a small amount of information about the data, which are combined to form a powerful representation of the dataset.

Whilst random forests are considered a ‘black-box’, in that one cannot be reasonably expected to inspect thousands of individual trees, variable importance can still be aggregated across trees, for example by counting the frequency a variable was selected across trees, calculating the minimal depth at which a variable was used for splitting, or via permutation based feature importance. Hence the model remains more interpretable than many alternative methods. Finally, random forests are less prone to overfitting and this can be relatively easily controlled by using early-stopping methods, for example by continually growing trees until the performance of the model stops improving.

11.2 Random Survival Forests

Unlike other machine learning methods that may require complex changes to underlying algorithms, random forests can be relatively simply adapted to random survival forests by updating the splitting rules and terminal node predictions to those that can handle censoring and can make survival predictions. This chapter is therefore focused on outlining different choices of splitting rules and terminal node predictions, which can then be flexibly combined into different models.

11.2.1 Splitting Rules

Survival trees and RSFs have been studied for the past four decades and whilst there are many possible splitting rules (Bou-Hamad et al. 2011), only two broad classes are commonly utilized (as judged by number of available implementations, e.g., Pölsterl 2020; Wright and Ziegler 2017; Ishwaran et al. 2011). The first class rely on the log-rank test to maximize dissimilarity between splits, the second class utilizes likelihood-based measures. The first is discussed in more detail as this is common in practice and is relatively straightforward to implement and understand, moreover it has been demonstrated to outperform other splitting rules (Bou-Hamad et al. 2011). Likelihood rules are more complex and require assumptions that may not be realistic, these are discussed briefly.

Log-rank test

The log-rank test statistic has been widely utilized as a splitting-rule for survival analysis (Ciampi et al. 1986; Ishwaran et al. 2008; LeBlanc and Crowley 1993; Segal 1988). The log-rank test compares the survival distributions of two groups under the null-hypothesis that both groups have the same underlying risk of (immediate) events, with the hazard function used to compare underlying risk.

Let \(L^l\) and \(L^r\) be two leaves, and as before \(i \in L\) indexes the observations in leaf \(L\). Define:

- \(n_\tau^l\), the number of observations at risk at \(\tau\) in leaf \(l\)

\[ n_\tau^l = \sum_{i \in L^l} \mathbb{I}(t_i \geq \tau) \]

- \(o^l_{\tau}\), the observed number of events in leaf \(l\) at \(\tau\)

\[ o^l_{\tau} = \sum_{i \in L^l} \mathbb{I}(t_i = \tau, \delta_i = 1) \]

- \(n_\tau = n_\tau^l + n_\tau^r\), the number of observations at risk at \(\tau\) in both leaves

- \(o_\tau = o^l_{\tau} + o^r_{\tau}\), the observed number of events at \(\tau\) in both leaves

Recall \(t_{(k)}\) is the \(k\)th ordered event time. The log-rank test evaluates if hazard functions are equal in both leaves, \(H_0: h^l = h^r\), with test statistic (Segal 1988),

\[ LR(L^l) = \frac{\sum_{k=1}^m (o^l_{t_{(k)}} - e^l_{t_{(k)}})}{\sqrt{\sum_{k=1}^m v_{t_{(k)}}^l}} \]

where:

- \(e^l_{\tau}\) is the expected number of events in leaf \(l\) at \(\tau\)

\[ e^l_{\tau} := \frac{n_\tau^l o_\tau}{n_\tau} \]

- \(v^l_\tau\) is the variance of the number of events in leaf \(l\) at \(\tau\)

\[ v^l_{\tau} := e^l_{\tau} \Big(\frac{n_\tau - o_\tau}{n_\tau}\Big)\Big(\frac{n_\tau - n^l_\tau}{n_\tau - 1}\Big) \]

These results follow from the assumption that, under equal hazards in both leaves, the number of events at each unique event time follows a Hypergeometric distribution. The same statistic results if \(L^r\) is instead considered.

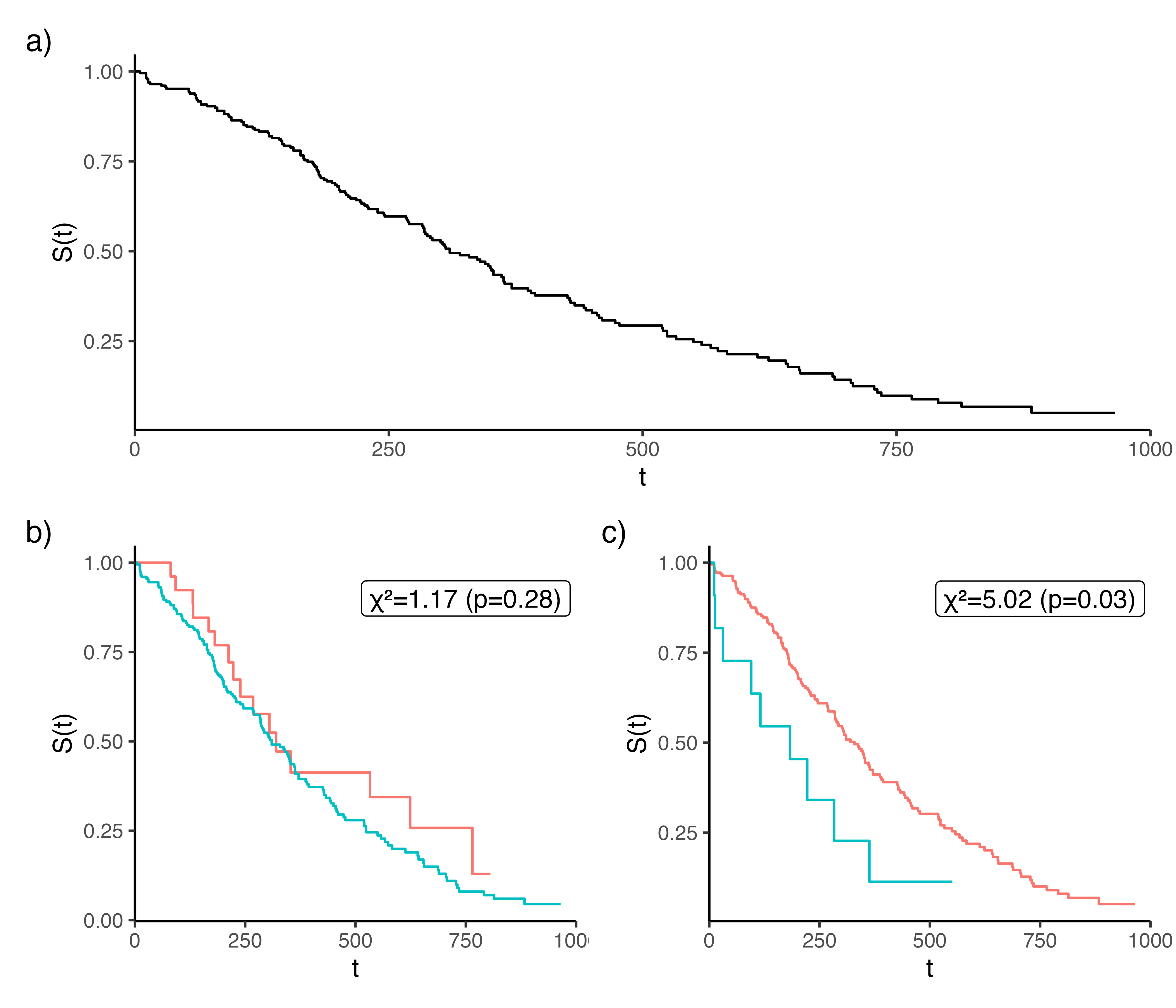

The higher the log-rank statistic, the greater the dissimilarity between the two groups (Figure 11.2), thereby making it a sensible splitting rule for survival, moreover it has been shown that it works well for splitting censored data (LeBlanc and Crowley 1993). Additionally, the log-rank test requires no knowledge about the shape of the survival curves or distribution of the outcomes in either group (Bland and Altman 2004), making it ideal for an automated process that requires no user intervention.

lung dataset from the \(\textsf{R}\) package survival. (b-c) is the same data stratified according to whether ‘age’ is greater or less than 50 (panel b) or 75 (panel c). The higher \(\chi^2\) statistic (panel c) results in a lower \(p\)-value and a greater difference between the stratified Kaplan-Meier curves. Hence splitting age at 75 results in a greater dissimilarity between the resulting branches and thus makes a better choice for splitting the variable.

The log-rank score rule (Hothorn and Lausen 2003) is a standardized version of the log-rank rule that could be considered as a splitting rule, though simulation studies have not demonstrated significant improvements in predictive performance when comparing the two (Ishwaran et al. 2008). Alternative dissimiliarity measures and tests have also been suggested as splitting rules, including modified Kolmogorov-Smirnov test and Gehan-Wilcoxon tests (Ciampi et al. 1988). Simulation studies have demonstrated that both of these may have higher power and produce ‘better’ results than the log-rank statistic (Fleming et al. 1980), however neither appears to be commonly used.

In a competing risk setting, either the per-cause log-rank test or Gray’s test (Gray 1988) can be used. The former computes the weighted difference between cause-specific Nelson-Aalen estimates in the two leaves; the latter compares cumulative incidence functions (Section 11.2.3) and hence might be preferred as the CIF is often the prediction of interest in competing risks settings (Ishwaran et al. 2014b).

Alternative Splitting Rules

A splitting rule is simply a way to measure if performance increases after data splitting. Therefore, there are many statistics that could be used for this purpose, including many of the metrics referenced in Part II (Ishwaran and Kogalur 2018; Gordon and Olshen 1985; Schmid et al. 2016). Like many other aspects of modelling, experiments have shown that the choice of splitting rules is data dependent (Schmid et al. 2016). When using loss-based splitting rules, the most sensible approach is to align the splitting rule with the prediction problem. For example, if the goal is to maximize separation, then a log-rank splitting rule to maximize homogeneity in terminal nodes is a natural starting point. Whereas if the goal is to accurately rank observations, then a concordance splitting rule may be optimal (Section 6.1).

An alternative to hypothesis testing and loss-based rules is to instead use likelihood ratio, or deviance, statistics (LeBlanc and Crowley 1992). The likelihood-ratio statistic can be used as a splitting rule to test if the model fit to the data is improved or worsened with each split. This approach can be tricky as one must assume a parametric form for the data and select the likelihood (Section 3.5.1) that best fits this form, however it does allow for taking into account different forms of censoring and truncation. Theoretically likelihood splitting rules could lead to a model that is a better fit for the data. However, the premise of random forests is that they are built on weak learners, so optimizing for a close fit in each individual decision tree may be unnecessary. Moreover, likelihood methods are implemented in few off-shelf software packages and there is limited research formally comparing them to other splitting rules.

11.2.2 Terminal Node Prediction

As in the regression setting, the usual strategy for predictions is to create a simple estimate based on the training data that lands in the terminal nodes. However, as seen throughout this book, the choice of estimator in the survival setting depends on the prediction task of interest, which are now considered in turn. First, note that all terminal node predictions can only yield useful results if there are a sufficient number of uncensored observations in each terminal node. Hence, a common RSF stopping rule is the minimum number of uncensored observations per leaf, meaning a leaf is not split if that would result in too few uncensored observations in the resulting leaves.

Probabilistic Predictions

Starting with the most common survival prediction type, the algorithm requires a simple estimate for the underlying survival distribution in each of the terminal nodes, which can be estimated using the Kaplan-Meier or Nelson-Aalen methods (Hothorn et al. 2004; Ishwaran et al. 2008; LeBlanc and Crowley 1993; Segal 1988).

Let \(b \in \{1,\ldots,B\}\) index the trees, let \(L_b(\mathbf{x}^*)\) denote the terminal node in tree \(b\) that observation \(\mathbf{x}^*\) falls into, and let \(t^*_{(1)} < \ldots < t^*_{(m)}\) be the ordered event times within \(L_b(\mathbf{x}^*)\). Then the predicted survival function and cumulative hazard for \(\mathbf{x}^*\) are,

\[ \hat{S}_{b}(\tau|\mathbf{x}^*) = \prod_{k:t^*_{(k)} \leq \tau} \left(1-\frac{d_{t^*_{(k)}}}{n_{t^*_{(k)}}}\right) \tag{11.2}\]

\[ \hat{H}_{b}(\tau|\mathbf{x}^*) = \sum_{k:t^*_{(k)} \leq \tau} \frac{d_{t^*_{(k)}}}{n_{t^*_{(k)}}} \tag{11.3}\]

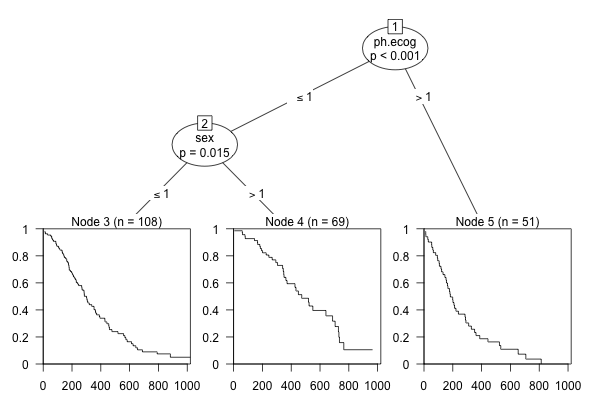

where \(d_{t^*_{(k)}}\) and \(n_{t^*_{(k)}}\) are the observed number of events and the number of observations at risk at \(t^*_{(k)}\), both computed from observations in \(L_b(\mathbf{x}^*)\). See Figure 11.3 for an example using the lung dataset (Therneau 2015).

lung dataset from the \(\textsf{R}\) package survival. The terminal node predictions are survival curves.

The bootstrapped prediction is the cumulative hazard function or survival function averaged over individual trees. Note that understanding what these bootstrapped functions represents depends on how they are calculated. By definition, a mixture of \(n\) distributions with cumulative distribution functions \(F_i, i = 1,...,n\) is given by

\[ F(x) = \sum^n_{i=1} w_i F_i(x) \]

Substituting \(F = 1 - S\) and noting \(\sum w_i = 1\) gives the computation \(S(x) = \sum^n_{i=1} w_i S_i(x)\), allowing the bootstrapped survival function to exactly represent the mixture distribution averaged over all trees:

\[ \hat{S}_{Boot}(\tau|\mathbf{x}^*) = \sum^B_{b=1} w_b\hat{S}_b(\tau|\mathbf{x}^*) \tag{11.4}\]

usually with \(w_b = 1/B\) where \(B\) is the number of trees. Equivalently, for the cumulative hazard,

\[ \hat{H}_{Boot}(\tau|\mathbf{x}^*) = \sum^B_{b=1} w_b\hat{H}_b(\tau|\mathbf{x}^*), \tag{11.5}\]

where again the Nelson-Aalen estimator should be used instead of transformation from the survival function to avoid \(-log(0)\) errors.

In other censoring and truncation settings, the estimator in the final node can be replaced by the nonparametric maximum likelihood estimator or left-truncated risk set (Section 3.5.2).

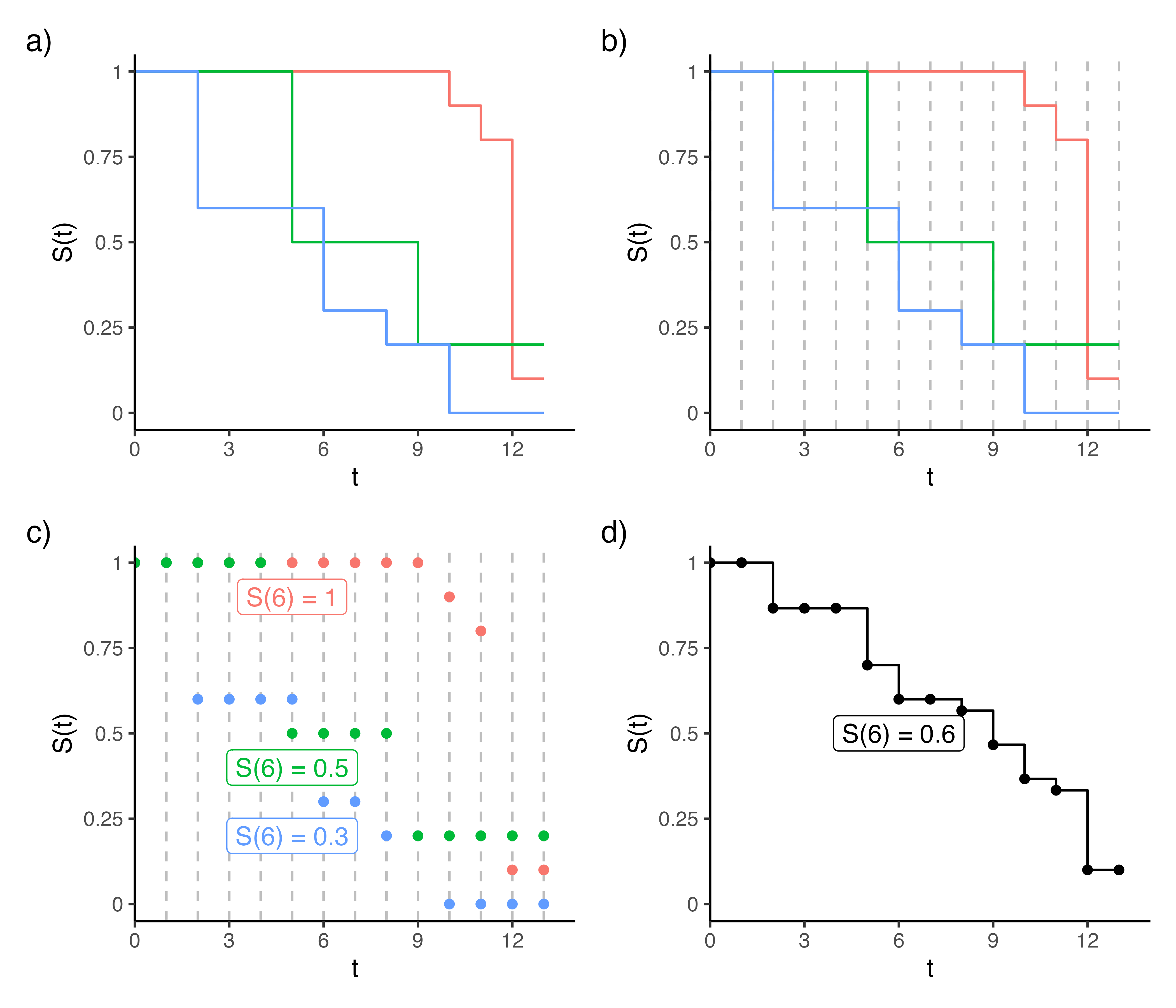

Another practical consideration is how to average the survival probabilities over the decision trees as each individual Kaplan-Meier estimate may be defined on different time points. This is overcome by recognizing that the Kaplan-Meier estimator is a step function: between event times, the survival probability is constant and equal to the last observed value. Figure 11.4 demonstrates this process for three decision trees, where panel (a) shows the estimates within three terminal nodes (red, green, blue). The aggregated survival probability is calculated at event times points across terminal nodes (dashed lines in panels b-c), and the average is computed by evaluating each step function at those time points and averaging (panel d).

11.2.3 Competing Risks

The RSF framework extends to competing risks (Section 4.2) in a natural way, replacing the single-event Kaplan-Meier and Nelson-Aalen estimators in the terminal nodes with the Aalen-Johansen CIF estimator (Section 4.2.2; Ishwaran et al. (2014a)). The key insight is that both the splitting rule and the terminal node estimator must be adapted to account for the presence of multiple event types.

Splitting Rules

The standard log-rank test compares cause-specific hazards across candidate splits, which is not the quantity of primary interest in the competing risks setting. Two adaptations have been proposed (Ishwaran et al. 2014a).

The first uses Gray’s test (Gray 1988), which tests \(H_0: F_e^l = F_e^r\), i.e. equal CIFs in the two candidate daughter nodes. Gray’s test targets the subdistribution hazard \(\tilde{h}_e(\tau) = -\frac{d}{d\tau}\log(1 - F_e(\tau))\), the hazard of a pseudo-population that keeps competing-event subjects permanently in the risk set. This is operationalized by augmenting the standard risk set with observations who already experienced a competing event: \[ \tilde{n}_{e,t_{(k)}} = n_{t_{(k)}} + \sum_{j:\, t_{(j)} < t_{(k)}} \sum_{e' \neq e} d_{e',t_{(j)}}, \] reflecting the assumption that, had those individuals not experienced a competing event, they would remain at risk of event \(e\). The test statistic is then computed analogously to the log-rank statistic but using \(\tilde{n}_{e,t_{(k)}}\) in place of \(n_{t_{(k)}}\), and aggregating over all causes.

The second approach computes a single weighted statistic by combining cause-specific log-rank contributions across all causes. For each cause \(e\), competing events of other causes are treated as censored, so the at-risk set is the standard one, and the cause-specific log-rank statistic \(LR_e(L^l)\) is computed exactly as in \(LR(L^l)\) above, with \(o^l_{e,t_{(k)}}\) and \(e^l_{e,t_{(k)}}\) denoting the observed and expected cause-\(e\) events in \(L^l\) at \(t_{(k)}\). These per-cause statistics are then combined into a single weighted statistic, \[ LR_{CR}(L^l) = \frac{\left| \sum_{e=1}^q w_e \sum_{k} (o^l_{e,t_{(k)}} - e^l_{e,t_{(k)}}) \right|}{\sqrt{\sum_{e=1}^q w_e^2 \sum_{k} v^l_{e,t_{(k)}}}}, \] where the inner sums are over all event times \(t_{(k)}\) in the node being split, \(v^l_{e,t_{(k)}}\) is the hypergeometric variance for cause \(e\) at \(t_{(k)}\), and \(w_e\) are cause weights (default \(w_e = 1/q\)). Setting \(w_e = 1\) for one cause and \(w_{e'} = 0\) otherwise reduces this to a standard single-cause log-rank test, making splits focus entirely on separating that cause’s hazard.

Terminal Node Estimator

Within each terminal node \(L_b(\mathbf{x}^*)\), the Aalen-Johansen estimator is used to estimate the CIF for each cause \(e \in \{1, \ldots, q\}\). Let \(t^*_{(1)} < \ldots < t^*_{(m)}\) be the ordered event times (of any cause) within \(L_b(\mathbf{x}^*)\), and let \(d_{e,t^*_{(k)}}\) and \(n_{t^*_{(k)}}\) denote the number of cause-\(e\) events and the number at risk at \(t^*_{(k)}\), both computed from observations in \(L_b(\mathbf{x}^*)\). The cause-specific discrete hazard (4.10) within the node is \(\hat{h}^d_{e,b}(t^*_{(k)}) = d_{e,t^*_{(k)}} / n_{t^*_{(k)}}\), the all-cause survival in the node is \[ \hat{S}_b(\tau|\mathbf{x}^*) = \prod_{k: t^*_{(k)} \leq \tau} \left(1 - \sum_{e=1}^q \hat{h}^d_{e,b}(t^*_{(k)})\right), \] and the CIF for cause \(e\) is the discrete Aalen-Johansen estimator (4.11) \[ \hat{F}_{e,b}(\tau|\mathbf{x}^*) = \sum_{k: t^*_{(k)} \leq \tau} \hat{S}_b(t^*_{(k-1)}|\mathbf{x}^*) \cdot \hat{h}^d_{e,b}(t^*_{(k)}), \tag{11.6}\] where \(t^*_{(0)} = 0\) so \(\hat{S}_b(t^*_{(0)}|\mathbf{x}^*) = 1\), accumulating weighted cause-specific hazard increments over time (cf. 4.9).

Ensemble CIF

The ensemble CIF is the weighted average of the per-tree Aalen-Johansen estimates: \[ \hat{F}_{e,Boot}(\tau|\mathbf{x}^*) = \sum_{b=1}^B w_b\hat{F}_{e,b}(\tau|\mathbf{x}^*). \tag{11.7}\]

As with the single-event case (11.4), averaging the CIF directly is justified because the CIF is itself a probability and the ensemble represents a mixture of the per-tree distributions. The constraint \(\sum_{e=1}^q \hat{F}_{e,Boot}(\tau|\mathbf{x}^*) \leq 1\) is preserved, since it holds for each tree’s Aalen-Johansen estimate and is maintained under convex combination.

Deterministic Predictions

As discussed in Chapter 9, predicting and evaluating survival times is a complex and fairly under-researched area. For RSFs, there is an inclination to estimate survival times based on the mean or median survival times of observations in terminal nodes, however this would lead to biased estimations. Therefore, research has tended to focus on relative risk predictions.

As discussed, relative risks are arbitrary values where only the resulting rank matters when comparing observations. In RSFs, each terminal node should be as homogeneous as possible, hence within a terminal node, the risk between observations should be the same. The most common method to estimate average risk appears to be a transformation from the Nelson-Aalen method (Ishwaran et al. 2008), which exploits results from counting process to provide a measure of expected mortality (Hosmer Jr et al. 2011) – the same result is used in the event frequency calibration measure discussed in Section 7.1.2. Given new data \(\mathbf{x}^*\) falling into terminal node \(L_b(\mathbf{x}^*)\), the relative risk prediction is the sum of the predicted cumulative hazard \(\hat{H}_b(\cdot|\mathbf{x}^*)\) (computed with the Nelson-Aalen estimator) evaluated at the observed outcome time of each observation \(i \in L_b(\mathbf{x}^*)\):

\[ \phi_b(\mathbf{x}^*) = \sum_{i \in L_b(\mathbf{x}^*)} \hat{H}_b(t_i|\mathbf{x}^*) \]

This is interpreted as the number of expected events in \(L_b(\mathbf{x}^*)\), with the assumption that a terminal node with more expected events represents a higher risk group. The bootstrapped risk prediction is the weighted average over all trees:

\[ \phi_{Boot}(\mathbf{x}^*) = \sum_{b=1}^B w_b\, \phi_b(\mathbf{x}^*). \tag{11.8}\]

More complex methods have also been proposed using likelihood-based splitting rules while assuming a PH model form (Ishwaran et al. 2004; LeBlanc and Crowley 1992), but these do not appear to be in wide-spread usage.

In the competing risks setting Ishwaran et al. (2014b) propose a similar metric using the predicted CIFs:

\[ \phi_{e;b(h)}(\mathbf{x}^*) = \int^{\tau^*}_0 \hat{F}_{e;b(h)}(\tau|\mathbf{x}^*) \ d\tau, \]

where \(\tau^*\) is suggested to be the end of the study period. \(\phi_{e;Boot}(\mathbf{x}^*)\) is computed as in (11.8).